a16z: The Metaverse Unlocks New Opportunities in Gaming Infrastructure

Author: James Gwertzman, a16z Games Partner

Compiled by: DeFi Path

You install the new parkour game that everyone is talking about, and your avatar immediately gains a new set of skills. A few minutes into the tutorial level, after climbing walls and jumping over obstacles, you’re ready for bigger challenges. You teleport yourself into your favorite game, Grand Theft Auto: Metaverse, following a tutorial set up by another player, and soon you’re leaping over car hoods, jumping from one rooftop to another. Wait… what’s that light below? Oh, an ultra-evolved Charizard! You pull out a Poké Ball from your pocket, capture it, and continue on your way…

This scenario isn't possible today, but I believe it is in our future. Composability (recycling, reusing, and recombining basic building blocks) and interoperability (allowing components from one game to work in another) are emerging in gaming, and I believe they will fundamentally change how games are built and experienced.

Game developers will build faster because they won't have to start from scratch every time. Freed to try new things and take new risks, they will build more creatively. And there will be more of them, because barriers to entry will be lower. The very definition of a game will expand to include these new "meta-experiences," which, like the example above, will function both within and outside of other games.

Of course, any discussion of "meta-experiences" inevitably raises another much-discussed idea: the metaverse. Many people view the metaverse as little more than a glorified game, but its potential is much greater than that. Ultimately, the metaverse represents how we humans will interact and communicate online in the future. In my view, game creators, building on game technology and game development processes, will be key to unlocking the potential of the metaverse.

Why game creators? No other industry has such rich experience in building large-scale online worlds where hundreds of thousands (sometimes millions!) of online participants interact simultaneously. Modern games are no longer just about "playing"—they are as much about "trading," "skills," "streaming," or "purchasing." The metaverse adds more verbs to that list—think "working" or "loving." Just as microservices and cloud computing opened the floodgates of innovation in the tech industry, I believe the next generation of gaming technology will usher in a new wave of gaming innovation and creativity.

This is already happening in limited ways. Many games now support user-generated content (UGC), allowing players to build their own extensions to existing games. Some games, like Roblox and Fortnite, are so expansive that they describe themselves as metaverses. However, the current generation of gaming technology is still built primarily for single-player experiences, which can only take us so far.

This revolution will require innovation across the entire tech stack, from production pipelines and creative tools to game engines and multiplayer networks, as well as analytics and real-time services.

This article outlines my vision for the coming transformation of gaming and then examines the new areas of innovation needed to unlock this new era.

Upcoming Gaming Transformation

For a long time, games have primarily been singular, fixed experiences. Developers would build them, release them, and then start building sequels. Players would buy them, play them, and then move on to other games once they had completed all the content—typically taking only 10 to 20 hours of gameplay to finish.

We are now in the era of games as a service, where developers continuously update their games after release. Many of these games also host metaverse-adjacent experiences, such as virtual concerts and educational content. Roblox and Minecraft even have marketplaces where player creators can earn money from their work.

It is crucial to note, however, that these games still (deliberately) wall themselves off from one another. Their individual worlds may be vast, but they are closed ecosystems: resources, skills, content, and friends cannot be transferred between them.

So how do we break free from the legacy of walled gardens to unlock the potential of the metaverse? As composability and interoperability become important concepts for metaverse-aware game developers, we will need to rethink how we handle the following issues:

- Identity. In the metaverse, players will need a single identity that they can carry across games and platforms. Today's games each insist on their own player profiles, so players must painstakingly rebuild their profiles and reputations from scratch in every new game they play.

- Friendships. Similarly, today's games maintain separate friend lists or, at best, use Facebook as a de facto social graph. Ideally, your friend network would follow you across games, making it easier to find friends to play with and to share leaderboard standings.

- Property. Today, items you acquire in one game cannot be transferred to or used in another game, and there are good reasons for this. Letting players bring modern assault rifles into a medieval game might be satisfying for a moment, but it would quickly ruin the game. With appropriate limits and restrictions, however, cross-game exchange of (some) items could open up new creativity and emergent gameplay.

- Gameplay. Today's games are tightly bound to their gameplay. For example, the entire fun of a platformer like Super Mario Odyssey comes from mastering its virtual world. But if games were opened up so that their elements could be "remixed," players could more easily assemble new experiences and explore their own "what if" scenarios.

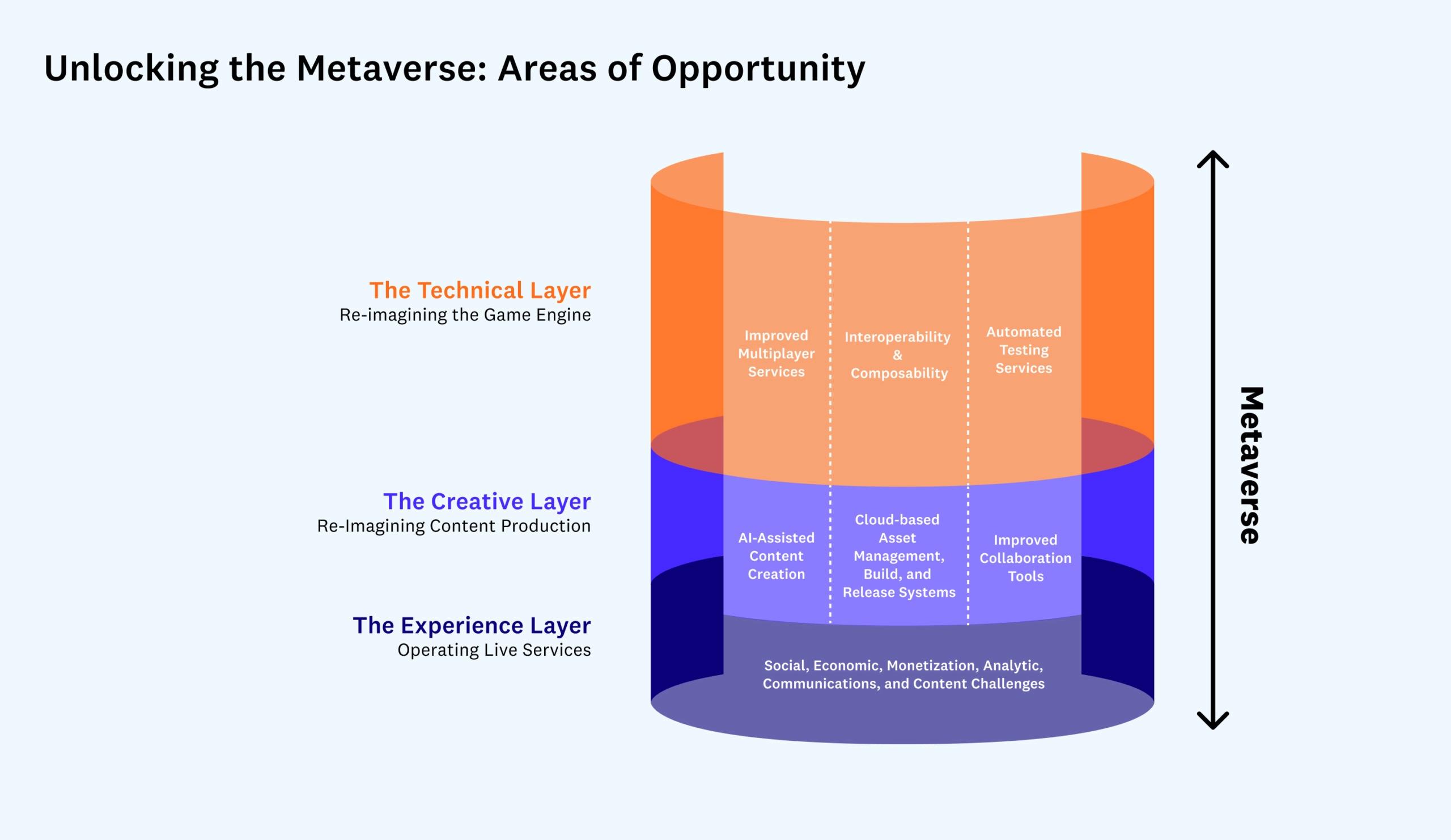

I see these changes happening at three distinct layers of game development: the technical layer (game engines), the creative layer (content creation), and the experiential layer (live operations). Each layer offers clear opportunities for innovation, which I outline below.

Note: Making games is a complex process involving many steps. More than other art forms, it is highly nonlinear and requires frequent cycles of iteration because, however interesting a game sounds on paper, you won't know whether it's actually fun until you play it. In this sense, it's more like choreographing a new dance, where the real work happens in the studio, rehearsing with the dancers over and over.

Technical Layer: Rethinking Game Engines

At the core of most modern game development is the game engine, which powers the player experience and makes it easier for teams to build new games. Popular engines like Unity or Unreal provide common functionalities that can be reused in games, freeing up game creators to build unique aspects of their games. This not only saves time and money but also creates a level playing field that allows smaller teams to compete with larger ones.

That said, the fundamental role of game engines relative to the rest of a game hasn't really changed in the past 20 years. Engines have expanded the services they provide, from basic graphics rendering and audio playback to multiplayer and social services, post-launch analytics, and in-game advertising. Even so, they still serve primarily as code libraries, fully encapsulated by each game.

However, when considering the metaverse, engines play a more significant role. To break down the walls that separate one game or experience from another, games may need to be encapsulated and hosted within engines, rather than the other way around. In this expanded view, engines become platforms, and the communication between these engines will largely define what I consider a shared metaverse.

Take Roblox as an example. The Roblox platform provides the same key services as Unity or Unreal, including graphics rendering, audio playback, physics, and multiplayer gaming. However, it also offers other unique services, such as player avatars and identities that can be shared in its game directory; extended social services, including shared friend lists; robust safety features that help keep the community safe; and tools and asset libraries to help players create new games.
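To make that inversion concrete, here is a minimal Python sketch in which the platform owns shared services and the game is just an entry point that the platform hosts. It illustrates the idea under stated assumptions and does not describe how Roblox, Unity, or Unreal actually work. `Platform`, `host_game`, `launch`, and `parkour_game` are hypothetical names, and the shared services are reduced to a player registry and a friend graph.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Player:
    player_id: str
    display_name: str
    friends: set[str] = field(default_factory=set)


# A hosted game is just an entry point the platform calls with its shared services.
GameEntry = Callable[["Platform", Player], str]


class Platform:
    """Hypothetical engine-as-platform that owns identity and the friend graph."""

    def __init__(self) -> None:
        self.players: dict[str, Player] = {}
        self.games: dict[str, GameEntry] = {}

    def register_player(self, player_id: str, name: str) -> Player:
        # One identity per player, shared by every game on the platform.
        self.players[player_id] = Player(player_id, name)
        return self.players[player_id]

    def add_friend(self, a: str, b: str) -> None:
        # Friendships live in the platform, not inside any single game.
        self.players[a].friends.add(b)
        self.players[b].friends.add(a)

    def host_game(self, title: str, entry: GameEntry) -> None:
        # The game plugs into the platform instead of embedding the engine.
        self.games[title] = entry

    def launch(self, title: str, player_id: str) -> str:
        return self.games[title](self, self.players[player_id])


def parkour_game(platform: Platform, player: Player) -> str:
    # The game reads shared services it never had to implement itself.
    friends = sorted(platform.players[f].display_name for f in player.friends)
    return f"{player.display_name} starts the parkour course with friends: {friends}"


platform = Platform()
platform.register_player("p1", "Ada")
platform.register_player("p2", "Lin")
platform.add_friend("p1", "p2")
platform.host_game("parkour", parkour_game)
print(platform.launch("parkour", "p1"))
# Ada starts the parkour course with friends: ['Lin']
```

Because the friend graph lives in the platform, a second hosted game would see the same friendship with no extra work. That is the kind of sharing Roblox already offers within its own platform.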

However, Roblox as a metaverse still falls short because it is a walled garden. While there is some limited sharing between games on the Roblox platform, there is no sharing or interoperability between Roblox and other game engines or platforms.

To fully unlock the metaverse, game engine developers must innovate in 1) interoperability and composability, 2) improved multiplayer services, and 3) automated testing services.

Interoperability and Composability

For example, to unlock the metaverse and allow Pokémon hunting in the Grand Theft Auto universe, these virtual worlds will require unprecedented levels of collaboration and interoperability. While it is possible for one company to control a universal platform that powers a global metaverse, this is neither desirable nor likely. Instead, it is more likely that decentralized game engine platforms will emerge.

Of course, I cannot discuss decentralized technology without mentioning web3. Web3 refers to a set of blockchain-based technologies that decentralize ownership by transferring control of key networks and services to users/developers through smart contracts. In particular, concepts like composability and interoperability in web3 help address some of the core issues faced in the move toward the metaverse, especially around identity and property, and significant research and development is going into core web3 infrastructure.

That said, while I believe web3 will be a key component in rethinking game engines, it is not a silver bullet.

The most obvious application of web3 technology in the metaverse may be allowing users to purchase and own items within the metaverse, such as virtual real estate plots or clothing for digital avatars. Since transactions written to the blockchain are a matter of public record, purchasing an item as a non-fungible token (NFT) theoretically allows one to own an item and use it across multiple metaverse platforms and several other applications.

However, I believe this will not happen in practice until the following issues are resolved:

- A single user identity that allows players to move between virtual worlds or games with a consistent identity. This is necessary for everything from matchmaking to content ownership to preventing trolls. One service attempting to address this is Hellō, a multi-stakeholder cooperative that aims to transform personal identity with a user-centric vision built primarily on web2 centralized identities. Others are using web3 decentralized identity models. Spruce, for example, lets users control their digital identity through wallet keys, while Sismo is a modular protocol that uses zero-knowledge proofs for decentralized identity management, among other things.

- A universal content format that allows content to be shared between engines. Today, each engine has its own proprietary format, which is necessary for performance. To exchange content between engines, however, a standard open format is needed. One such format is Pixar's Universal Scene Description (USD). NVIDIA's Omniverse, a platform built on USD, is another effort in this direction. Still, every type of content will need such standards.

- Cloud-based content storage so that content required by one game can be located and accessed by others. Today, the content a game needs is usually either packaged with the game as part of its release bundle or downloaded over the internet (accelerated by content delivery networks, or CDNs). For content to be shared between worlds, there needs to be a standard way to locate and retrieve it.

- Shared payment mechanisms so that metaverse owners have a financial incentive to let assets move between metaverses. Selling digital assets is one of the primary ways platform owners make money, especially under the "free-to-play" business model. To persuade platform owners to loosen control, asset owners could therefore pay a "corkage fee" for the privilege of using their assets on the platform. Alternatively, if the asset in question is well known, a metaverse might even be willing to pay its owner to bring it into their world.

- Standardized functionality so that a metaverse can understand how a given item is meant to be used. If I want to bring my fancy sword into your game and use it to slay monsters, your game needs to know that it's a sword, not just a pretty stick. One way to address this is a taxonomy of standard object interfaces that each metaverse can choose whether to support. Categories might include weapons, vehicles, clothing, or furniture.

- Negotiated appearances and behaviors so that assets can change their appearance to match the world they are entering. For example, if I want to drive my high-tech sports car into your strictly steampunk-themed world, my car may need to transform into a steam-powered off-road vehicle to be allowed in. Responsibility for this could lie with my asset, which would need to know how to adapt, or with the world I am entering, which would provide alternative appearances and behaviors.
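The last two requirements, a shared taxonomy of object interfaces and negotiated appearances, can be combined in one small sketch. It uses Python's `typing.Protocol` as a stand-in for whatever interface standard worlds might eventually agree on. `Weapon`, `Vehicle`, `World.admit`, and the theme names are all hypothetical. In this model, a world accepts an item only if it recognizes one of the item's interfaces. It then uses the asset's own variant for its theme if one exists, and otherwise falls back to a substitute look that the world supplies.

```python
from dataclasses import dataclass, field
from typing import Optional, Protocol, runtime_checkable


# Hypothetical shared taxonomy: each world chooses which interfaces it supports.
@runtime_checkable
class Weapon(Protocol):
    damage: int

    def attack(self, target: str) -> str: ...


@runtime_checkable
class Vehicle(Protocol):
    top_speed_kmh: int

    def drive(self, destination: str) -> str: ...


@dataclass
class FancySword:
    damage: int = 40

    def attack(self, target: str) -> str:
        return f"slashes {target} for {self.damage}"


@dataclass
class SportsCar:
    top_speed_kmh: int = 300
    # Variants the asset owner prepared, keyed by the theme of the target world.
    looks: dict[str, str] = field(default_factory=lambda: {"cyberpunk": "neon hovercar"})

    def drive(self, destination: str) -> str:
        return f"drives to {destination}"


@dataclass
class PrettyStick:
    color: str = "gold"  # implements no standard interface


@dataclass
class World:
    theme: str
    supported: tuple[type, ...]
    # Looks the world itself supplies, keyed by interface name.
    substitutes: dict[str, str] = field(default_factory=dict)

    def admit(self, item: object) -> Optional[str]:
        """Return the look the item will wear in this world, or None if refused."""
        iface = next((i for i in self.supported if isinstance(item, i)), None)
        if iface is None:
            return None  # the world cannot tell what this object is for
        looks = getattr(item, "looks", {})
        if self.theme in looks:
            return looks[self.theme]  # the asset knows how to adapt itself
        return self.substitutes.get(iface.__name__)  # else the world decides, or refuses


steampunk = World(
    "steampunk",
    (Weapon, Vehicle),
    {"Weapon": "brass sabre", "Vehicle": "steam-powered off-road vehicle"},
)
print(steampunk.admit(SportsCar()))    # steam-powered off-road vehicle
print(steampunk.admit(FancySword()))   # brass sabre
print(steampunk.admit(PrettyStick()))  # None
```

In this sketch, `admit` returns `None` whenever a world has neither a matching variant nor a substitute. That models a strict world that refuses anything it cannot restyle. A more permissive world might let the item's native look through instead.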

Improving Multiplayer Systems

One major area of focus is multiplayer and social features. More and more games today are multiplayer, because games with social features significantly outperform single-player games. Because the metaverse will by definition be entirely social, it will face a variety of problems unique to online experiences. Social games must deal with harassment and toxicity. They are also more vulnerable to DDoS attacks that drive players away, and they often must run servers in data centers around the globe to minimize latency and provide the best player experience.

Despite the importance of multiplayer features to modern games, there is still a shortage of complete off-the-shelf solutions. Engines like Unreal or Roblox and services like Photon or PlayFab provide the fundamentals, but developers must fill in the gaps themselves for advanced matchmaking and other features.

Innovations in multiplayer systems could include:

- Serverless multiplayer gaming, where developers can implement authoritative game logic and automatically host and scale in the cloud without worrying about launching actual game servers.

- Advanced matchmaking that helps players quickly find others of similar skill to compete against, including AI tools that help assess player skill and ranking. In the metaverse this becomes even more important, because "matchmaking" becomes so broad (for example, "find me someone to practice Spanish with," rather than just "find me a group of players to raid a dungeon").

- Anti-toxicity and anti-harassment tools that help identify and weed out toxic players. Any company hosting a metaverse will need to proactively worry about this, as online citizens will not spend time in unsafe spaces—especially if they can jump to another world with all their identities and assets intact.

- Guilds or clans that help players band together to compete against other groups or simply to enjoy more shared social experiences. Virtual worlds are full of opportunities for players to join forces around common goals. This creates room for services that help form and manage guilds, as well as services that sync with external community tools like Discord.
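Skill-based matchmaking of the kind described above can be sketched with the Elo rating system. Elo appears here only as a familiar, well-documented stand-in for the AI-driven skill models the text mentions. The `activity` field is a hypothetical way to capture broader, metaverse-style requests, such as a raid group versus a Spanish conversation partner. Pairing is greedy: players with the smallest rating gap are matched first, within a maximum window.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass
class Seeker:
    name: str
    rating: float
    activity: str  # hypothetical tag, e.g. "raid" or "spanish-practice"


def expected_score(r_a: float, r_b: float) -> float:
    """Elo expectation that A beats B (between 0 and 1)."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def update_elo(r_a: float, r_b: float, score_a: float, k: float = 32) -> tuple[float, float]:
    """score_a is 1 for an A win, 0.5 for a draw, 0 for a loss."""
    delta = k * (score_a - expected_score(r_a, r_b))
    return r_a + delta, r_b - delta


def best_pairs(queue: list[Seeker], max_gap: float = 150) -> list[tuple[str, str]]:
    """Greedily pair seekers who want the same activity, closest ratings first."""
    candidates = [
        (a, b)
        for a, b in combinations(queue, 2)
        if a.activity == b.activity and abs(a.rating - b.rating) <= max_gap
    ]
    candidates.sort(key=lambda pair: abs(pair[0].rating - pair[1].rating))
    matched: set[str] = set()
    pairs = []
    for a, b in candidates:
        if a.name not in matched and b.name not in matched:
            matched.update((a.name, b.name))
            pairs.append((a.name, b.name))
    return pairs


queue = [
    Seeker("ana", 1500, "raid"),
    Seeker("bo", 1520, "raid"),
    Seeker("cy", 1900, "raid"),  # no raider close enough in skill yet
    Seeker("di", 1200, "spanish-practice"),
    Seeker("ed", 1250, "spanish-practice"),
]
print(best_pairs(queue))            # [('ana', 'bo'), ('di', 'ed')]
print(update_elo(1500, 1500, 1.0))  # (1516.0, 1484.0)
```

A production matchmaker would likely also widen `max_gap` the longer a player waits, so that players like `cy` are not stranded. That policy is left out here to keep the sketch short.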

Automated Testing Services

Testing is a costly bottleneck when releasing any online experience, because a small team of testers must play the game over and over to ensure everything works as expected, without bugs or glitches.

Skipping this step is risky. Consider the highly anticipated Cyberpunk 2077, which players heavily criticized for the numerous bugs that surfaced after its release. And because the metaverse is essentially an "open-world" game with no fixed path through it, testing it could become very expensive. One way to ease this bottleneck is to develop automated testing tools, such as AI agents that play the game as a player would, looking for bugs, crashes, or errors. A side benefit of this technology is reliable AI players. These can stand in for real players who unexpectedly drop out of multiplayer matches, and they can provide early multiplayer "match liquidity" that reduces how long players wait for matches to start.

Innovations in automated testing services could include:

- Automatically training new agents by observing real players interacting with the world. One benefit of this is that as the virtual world runs longer, the agents will continue to become smarter and more reliable.

- Automatically identifying bugs or errors and deep linking directly to the point where they occur, so that human testers can reproduce and fix them.

- Swapping AI agents in for real players, so that if one player suddenly drops out of a multiplayer experience, it does not end for everyone else. This also raises interesting questions, such as whether players could "tag out" to an AI substitute at any time, or even "train" their own substitutes to compete on their behalf. Will "AI-assisted" become a new category of competition?
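The core loop behind automated testing and deep-linked bug reports can be illustrated with a deliberately simple agent. This sketch assumes a hypothetical `ToyWorld` grid with one planted crash. In place of the trained AI agents the text describes, it uses a seeded random walk. The point is that recording the agent's actions yields a trace that replays the crash deterministically, which is what would let a human tester jump straight to the failure.

```python
import random
from typing import Optional

MOVES = {"E": (1, 0), "N": (0, -1), "S": (0, 1), "W": (-1, 0)}


class ToyWorld:
    """Hypothetical stand-in for a game level: an 8x8 grid with one planted bug."""

    def __init__(self, size: int = 8, bug_at: tuple[int, int] = (5, 6)) -> None:
        self.size, self.bug_at, self.pos = size, bug_at, (0, 0)

    def step(self, move: str) -> None:
        dx, dy = MOVES[move]
        # Walls clamp movement, so the agent stays inside the level.
        x = min(max(self.pos[0] + dx, 0), self.size - 1)
        y = min(max(self.pos[1] + dy, 0), self.size - 1)
        self.pos = (x, y)
        if self.pos == self.bug_at:
            raise RuntimeError(f"crash at {self.pos}")


def explore(seed: int, max_steps: int = 100_000) -> Optional[list[str]]:
    """Seeded random-walk agent; returns the action trace that led to a crash."""
    rng = random.Random(seed)
    world, trace = ToyWorld(), []
    for _ in range(max_steps):
        move = rng.choice("ENSW")
        trace.append(move)
        try:
            world.step(move)
        except RuntimeError:
            return trace  # this trace is the "deep link" to the bug
    return None


def replay(trace: list[str]) -> bool:
    """Re-run a trace step by step; True means the crash reproduced."""
    world = ToyWorld()
    try:
        for move in trace:
            world.step(move)
    except RuntimeError:
        return True
    return False


trace = explore(seed=7)
print(f"crash found after {len(trace)} steps; reproduces: {replay(trace)}")
```

Because `explore` stops at the first crash, replaying the trace minus its last move does not crash. That gives a tester the exact step at which things go wrong.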

Creative Layer: Rethinking Content Creation

As 3D rendering technology becomes more powerful, the amount of digital content required to create games keeps growing. Consider Forza Horizon 5, the latest game in the racing series. It is the largest Forza download ever, requiring over 100 GB of disk space, compared with 60 GB for Forza Horizon 4. And this is just the tip of the iceberg. The original "source art files" that artists create to build the final game can be many times larger. This growth is driven by the increasing scale and quality of these virtual worlds, with ever higher levels of detail and fidelity.

Now consider the metaverse. As more experiences shift from the physical world to the digital world, the demand for high-quality digital content will continue to increase.

This has already happened in film and television. The Disney+ show The Mandalorian broke new ground by filming on "virtual sets" running in the Unreal game engine. This is revolutionary because it reduces production time and cost while increasing the scope and quality of the final product. I hope to see more and more productions filmed this way in the future.

Additionally, physical film sets are often destroyed after filming because they are expensive to store, whereas digital sets can easily be kept for future reuse. In fact, it makes sense to invest more money, not less, in building a fully realized world that can later be reused to create fully interactive experiences. I hope that in the future we will see these worlds made available to other creators, who could build new content within these fictional realities and further advance the metaverse.

Now consider how this content is created. It is increasingly produced by artists distributed around the world. One of the lasting impacts of COVID-19 is a permanent shift toward remote development, with teams spread across the globe and often working from home. The benefit of remote development is clear: studios can hire talent from anywhere. The costs are significant too. Creative collaboration becomes harder, the vast number of assets required to build modern games must be kept in sync, and intellectual property must be kept secure.

Given these challenges, I believe there will be three major areas of innovation in digital content creation: 1) AI-assisted content creation tools, 2) cloud-based asset management, building, and publishing systems, and 3) collaborative content generation.

AI-Assisted Content Creation

Today, almost all digital content is still manually constructed, which increases the time and costs required to release modern games. Some games have experimented with "procedural content generation," where algorithms can help generate new dungeons or worlds, but building these algorithms can be very challenging.
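As a small illustration of procedural content generation, here is the classic "drunkard's walk" cave carver. It was chosen because it is one of the simplest techniques of this kind, not because any particular game uses it. Seeding the random number generator makes each layout reproducible, which matters when designers later need to regenerate or tweak a level.

```python
import random


def carve_dungeon(width: int, height: int, floor_target: int, seed: int) -> list[str]:
    """Drunkard's walk: wander from the center, turning walls into floor."""
    if floor_target > (width - 2) * (height - 2):
        raise ValueError("floor_target exceeds the interior area")
    rng = random.Random(seed)  # same seed -> same dungeon
    grid = [["#"] * width for _ in range(height)]
    x, y, carved = width // 2, height // 2, 0
    while carved < floor_target:
        if grid[y][x] == "#":
            grid[y][x] = "."
            carved += 1
        dx, dy = rng.choice([(1, 0), (-1, 0), (0, 1), (0, -1)])
        # Clamp to the interior so the outer ring always stays solid wall.
        x = min(max(x + dx, 1), width - 2)
        y = min(max(y + dy, 1), height - 2)
    return ["".join(row) for row in grid]


for row in carve_dungeon(width=24, height=10, floor_target=70, seed=42):
    print(row)
```

The algorithm is trivial to write. The hard part the text alludes to is tuning generators like this so the results are consistently fun, which is where such simple approaches tend to fall short.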

However, a new wave of AI-assisted tools is on the horizon that will help both artists and non-artists create content faster and at higher quality, reducing production costs and democratizing game content production.

This is particularly important for the metaverse, as almost everyone will be expected to be a creator—but not everyone can create world-class art. By art, I mean the entire category of digital assets, including virtual worlds, interactive characters, music, sound effects, and more.

Innovations in AI-assisted content creation will include conversion tools that can transform photos, videos, and other real-world artifacts into digital assets, such as 3D models, textures, and animations. Examples include Kinetix, which can create animations from videos; Luma Labs, which creates 3D models from photos; and COLMAP, which can create navigable 3D spaces from static photos.

There will also be innovations in creative assistants that take guidance from artists and iteratively create new assets. For example, Hypothetic can generate 3D models from hand-drawn sketches. Inworld.ai and Charisma.ai both use AI to create believable characters that players can interact with. DALL-E can generate images from natural language inputs.

An important aspect of using AI-assisted content creation in games is repeatability. Because creators often need to go back and make changes, simply storing the output of AI tools is not enough. Game creators must store the full set of instructions that produced each asset, so that artists can return later to change it, or duplicate it and modify it for new purposes.
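One way to store "the full set of instructions" is to save a recipe record alongside every generated asset. In this sketch, `Recipe`, `fake_generate`, and the tool name are hypothetical, and the "generator" is a hash function standing in for an AI model. The sketch assumes the real tool produces identical output for a given version, prompt, and seed, which not every AI tool guarantees.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class Recipe:
    """The full instruction set behind an asset, not just its output."""
    tool: str     # hypothetical tool name, including its version
    prompt: str
    seed: int
    params: tuple[tuple[str, float], ...] = ()


def fake_generate(recipe: Recipe) -> bytes:
    # Stand-in for an AI tool: output depends only on the recipe, like a seeded model.
    blob = json.dumps(asdict(recipe), sort_keys=True).encode()
    return hashlib.sha256(blob).digest()


def save_asset(store: dict[str, dict], name: str, recipe: Recipe) -> None:
    # Keep the recipe alongside the output so the asset can be regenerated later.
    store[name] = {"recipe": asdict(recipe), "output": fake_generate(recipe).hex()}


def load_recipe(entry: dict) -> Recipe:
    r = entry["recipe"]
    return Recipe(r["tool"], r["prompt"], r["seed"], tuple(tuple(p) for p in r["params"]))


store: dict[str, dict] = {}
base = Recipe("img-gen-v1", "mossy forest ogre", seed=1234, params=(("detail", 0.8),))
save_asset(store, "forest-ogre", base)

# Later: regenerate the exact asset, or duplicate the recipe and modify it.
regenerated = fake_generate(load_recipe(store["forest-ogre"]))
assert regenerated.hex() == store["forest-ogre"]["output"]
save_asset(store, "snow-ogre", replace(base, prompt="snowy forest ogre"))
```

Deriving `snow-ogre` by editing a single field of the stored recipe is the "duplicate the asset and modify it" workflow. Keeping only the output bytes would make that impossible.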

Cloud-Based Asset Management, Building, and Publishing Systems

One of the biggest challenges game studios face when building modern video games is managing all the content required to create engaging experiences. Today, this is a relatively unsolved problem, with no standardized solutions; each studio must piece together its own solution.

To understand why this is such a difficult problem, consider the vast amounts of data involved. Large games may require millions of different types of files, including textures, models, characters, animations, levels, visual effects, sound effects, recorded dialogue, and music.

Each of these files will be changed repeatedly during production, and a copy of every variant must be kept in case creators need to revert to an earlier version. Today, artists often do this by simply renaming files (e.g., "forest-ogre-2.2.1"), which leads to file bloat. Because these files are often large and hard to compress, and each revision must be stored in full, this consumes a lot of storage. Source code is different: only the changes between revisions need to be stored, which is very efficient. That approach does not work for many content files, such as artwork, because changing even a small part of an image can change almost the entire file.

Moreover, these files do not exist in isolation. They are part of an overall process often referred to as the content pipeline, which describes how all these individual content files come together to create a playable game. In this process, "source art" files created by artists are transformed through a series of intermediate files into "game assets" for use by the game engine.

Today's pipelines are not very smart and often don't know about the dependencies between assets. For example, the pipeline often doesn't know which texture is used by the 3D basket held by a particular farmer character who appears in a given level. As a result, whenever any asset changes, the entire pipeline must be rebuilt to make sure every change is picked up. This is a time-consuming process that can take hours or longer, slowing down creative iteration.

The demands of the metaverse will exacerbate these existing problems and create some new ones. For example, the metaverse will be vast—larger than today’s largest games—so all existing content storage issues apply to the metaverse. Additionally, the metaverse's "always-on" nature means that new content needs to be streamed directly into the game engine; it is impossible to "stop" the metaverse to create new builds. The metaverse needs to be able to update itself instantly. To achieve composability goals, remote and distributed creators will need access to source assets, the ability to create their own derivatives, and methods to share them with others.

Meeting these demands of virtual worlds will create two major opportunities for innovation. First, artists need an asset management system that is easy to use and similar to GitHub, providing them with the same level of version control and collaboration tools that developers currently enjoy. Such a system needs to integrate with all popular creative tools, such as Photoshop, Blender, and Sound Forge. Mudstack is an example of a company currently focused on this area.
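At the storage level, "GitHub for artists" can be sketched briefly: one logical file name with a linear history, instead of renamed copies like forest-ogre-2.2.1. This is a hypothetical in-memory model, not Mudstack's or Git's actual design. It stores each revision as a whole blob, for the reason given earlier that binary art rarely diffs well, but it deduplicates identical content by hash so that reverting costs no extra space.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AssetHistory:
    """Hypothetical artist-facing version store: one name, full history, no renamed copies."""

    blobs: dict[str, bytes] = field(default_factory=dict)  # content hash -> bytes
    revisions: dict[str, list[tuple[str, str]]] = field(default_factory=dict)  # name -> [(hash, message)]

    def commit(self, name: str, data: bytes, message: str) -> int:
        digest = hashlib.sha256(data).hexdigest()
        # Whole blobs, since binary art diffs poorly, but identical content is stored once.
        self.blobs.setdefault(digest, data)
        self.revisions.setdefault(name, []).append((digest, message))
        return len(self.revisions[name])  # 1-based revision number

    def checkout(self, name: str, revision: Optional[int] = None) -> bytes:
        history = self.revisions[name]
        digest, _ = history[-1] if revision is None else history[revision - 1]
        return self.blobs[digest]

    def revert(self, name: str, revision: int) -> int:
        # A revert is a new commit that restores old content; history is never rewritten.
        return self.commit(name, self.checkout(name, revision), f"revert to r{revision}")

    def log(self, name: str) -> list[str]:
        return [f"r{i}: {msg}" for i, (_, msg) in enumerate(self.revisions[name], start=1)]


repo = AssetHistory()
repo.commit("forest-ogre.psd", b"<v1 pixels>", "first pass")
repo.commit("forest-ogre.psd", b"<v2 pixels>", "darker skin")
repo.revert("forest-ogre.psd", 1)
print(repo.log("forest-ogre.psd"))
# ['r1: first pass', 'r2: darker skin', 'r3: revert to r1']
```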

Second, there is still much work to be done in automating content pipelines, which can modernize and standardize the art pipeline. This includes exporting source assets to intermediate formats and building those intermediate formats into game-ready assets. Smart pipelines will understand dependency graphs and be able to perform incremental builds so that when assets change, only those files with downstream dependencies will be rebuilt—greatly reducing the time required to view new content in the game.
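A dependency-aware incremental build can be sketched with Python's standard `graphlib.TopologicalSorter` (Python 3.9+). The file names are hypothetical and echo the farmer-and-basket example above. Editing the basket's source texture should rebuild the texture, the farmer prefab that uses it, and the level the farmer appears in, but nothing else.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each built target lists the files it is built from.
DEPENDS_ON: dict[str, list[str]] = {
    "basket.tex": ["basket.psd"],
    "basket.mesh": ["basket.blend"],
    "farmer.prefab": ["basket.mesh", "basket.tex", "farmer.fbx"],
    "village.level": ["farmer.prefab", "village.map"],
    "castle.level": ["castle.map"],
}


def dirty_targets(changed: set[str], deps: dict[str, list[str]]) -> list[str]:
    """Return only the targets downstream of `changed`, in a valid build order."""
    dirty: set[str] = set()
    grew = True
    while grew:  # keep propagating staleness until nothing new is marked
        grew = False
        for target, inputs in deps.items():
            if target not in dirty and any(i in changed or i in dirty for i in inputs):
                dirty.add(target)
                grew = True
    # static_order() lists every node after all of its inputs.
    return [node for node in TopologicalSorter(deps).static_order() if node in dirty]


print(dirty_targets({"basket.psd"}, DEPENDS_ON))
# ['basket.tex', 'farmer.prefab', 'village.level']
```

A real pipeline would also hash file contents to detect changes and cache intermediate outputs. The dependency graph is the part that lets it skip `castle.level` entirely.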

Improving Collaborative Tools

Despite the distributed, collaborative nature of modern game studios, many of the specialized tools used in the game development process are still centralized, single-creator tools. For example, by default, Unity and Unreal level editors only support one designer editing a level at a time. This slows down the creative process, as teams cannot work in parallel on a single world.

On the other hand, both Minecraft and Roblox support collaborative editing; this is one of the reasons these consumer platforms are so popular, despite lacking other specialized features. Once you’ve seen a group of kids build a city together in Minecraft, it’s hard to imagine wanting to build any other way. I believe collaboration will be a fundamental feature of the metaverse, allowing creators to come together online to build and test their work.

Overall, collaboration in game development will become real-time in nearly every aspect of the game creation process. Some ways that collaboration may evolve in the metaverse include:

- Real-time collaborative world building, allowing multiple level designers or "world builders" to edit the same physical environment simultaneously and see each other’s changes in real-time, with full version control and change tracking. Ideally, level designers should be able to seamlessly switch between play and edit modes for the fastest iteration. Some studios are experimenting with proprietary tools (like Ubisoft’s AnvilNext game engine) for this, while Unreal has attempted to introduce real-time collaboration as a beta feature originally built to support TV and film production.

- Real-time content review and approval, allowing teams to experience and discuss their work together. Group review has always been a key part of the creative process. Film productions have long held "dailies," where the production team collectively reviews each day's work, and most game studios have a large room with a big screen for group discussions. Tools for remote development, however, are much weaker: the fidelity of screen sharing in tools like Zoom is not high enough to view digital worlds accurately. One solution for games might be a "spectator mode," where the entire team can log in and see through a single player's eyes. Another would be higher-fidelity screen sharing with less compression, including faster frame rates, stereo sound, more accurate color matching, and the ability to pause and annotate. Such tools should also integrate with task tracking and assignment. Companies working on this problem for film include Frame.io and Sohonet.

- Real-time world adjustments, allowing designers to tweak any of the thousands of parameters that define modern games or virtual worlds and immediately experience the results. This adjustment process is crucial for creating engaging, balanced experiences, but often these numbers are hidden in spreadsheets or configuration files that are difficult to edit and cannot be adjusted in real-time. Some game studios have attempted to use Google Sheets for this, allowing changes to configuration values to be pushed immediately to game servers to update how the game behaves, but the metaverse will need something more powerful. Another benefit of this functionality is that these same parameters could also be modified for live events or new content updates, making it easier for non-programmers to create new content. For example, a designer could create a special event dungeon and place monsters that are harder to defeat than usual, but these monsters drop unusually generous rewards.

- A fully cloud-based virtual game studio where members of the game creator team (artists, programmers, designers, etc.) can log in from anywhere on any type of device (including low-end PCs or tablets) and have instant access to high-end game development platforms and a complete library of game resources. Remote desktop tools like Parsec may play a role here, but this is not just about remote desktop capabilities; it also includes how creative tools are licensed and how assets are managed.
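Real-time collaborative world building needs a rule for merging concurrent edits. One simple, well-known option is a last-writer-wins map (a basic CRDT), sketched here with Lamport clocks. `LevelReplica` and the object names are hypothetical, and this is not how Unreal's or Ubisoft's tools are known to work. Every replica that has seen the same edits converges on the same level state. However, when two edits conflict, one is silently discarded, and a real editor might prefer to surface that conflict to the designers.

```python
from dataclasses import dataclass

Key = tuple[str, str]  # (object id, property name)


@dataclass(frozen=True)
class Stamp:
    clock: int   # Lamport clock value when the edit was made
    editor: str  # tie-breaker, so every replica picks the same winner


class LevelReplica:
    """One designer's copy of a level; properties merge as last-writer-wins registers."""

    def __init__(self, editor: str) -> None:
        self.editor = editor
        self.clock = 0
        self.state: dict[Key, tuple[object, Stamp]] = {}

    def edit(self, obj: str, prop: str, value: object) -> None:
        self.clock += 1
        self.state[(obj, prop)] = (value, Stamp(self.clock, self.editor))

    def merge(self, other: "LevelReplica") -> None:
        for key, (value, stamp) in other.state.items():
            mine = self.state.get(key)
            if mine is None or (stamp.clock, stamp.editor) > (mine[1].clock, mine[1].editor):
                self.state[key] = (value, stamp)
            # Advance our clock past anything we have seen (Lamport rule).
            self.clock = max(self.clock, stamp.clock)

    def view(self) -> dict[Key, object]:
        return {key: value for key, (value, _) in self.state.items()}


ana, bo = LevelReplica("ana"), LevelReplica("bo")
ana.edit("tree_17", "x", 4.0)
bo.edit("tree_17", "x", 6.5)  # concurrent edit to the same property
bo.edit("rock_2", "scale", 2.0)
ana.merge(bo)
bo.merge(ana)
print(ana.view() == bo.view(), ana.view()[("tree_17", "x")])  # True 6.5
```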

Experiential Layer: Rethinking Live Operations for Real-Time Services

The final layer of rethinking games for the metaverse involves creating the tools and services needed to actually operate the metaverse itself, which is arguably the most challenging part. Building an immersive world is one thing; running it 24/7 for millions of players around the globe is another.

Developers must contend with:

- The social challenges of governing a large city, whose citizens do not always get along and sometimes need their disputes resolved.

- The economic challenges of running a central bank, which can mint new currency and must monitor the sources and sinks of that currency to control inflation and deflation.

- The monetization challenges faced by modern e-commerce sites, which have thousands or even millions of items for sale, along with the associated needs for promotions, discounts, and global marketing tools.

- The analytics challenges of knowing what is happening across their vast world in real time, so that they are alerted before problems spiral out of control.

- The challenges of communicating in any language, whether individually or collectively, with their digital constituents, given that the metaverse (in principle) is global.

- The challenge of shipping the frequent content updates needed to keep their metaverse growing and evolving.
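The central-bank analogy can be made concrete with a small monitor over currency "faucets" (where currency is created) and "sinks" (where it is destroyed). The `Flow` record, the 2% daily threshold, and the sample numbers are illustrative assumptions, not recommendations. The sketch flags any day on which net currency creation grows the money supply faster than the threshold, and it totals flows by source so an operator can see which faucet is responsible.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Flow:
    day: int
    kind: str    # which faucet or sink, e.g. "quest_reward" or "repair_fee"
    amount: int  # > 0: currency created (faucet); < 0: currency destroyed (sink)


def totals_by_kind(flows: list[Flow]) -> dict[str, int]:
    """Net amount per faucet/sink, to see where currency is coming from."""
    totals: dict[str, int] = defaultdict(int)
    for f in flows:
        totals[f.kind] += f.amount
    return dict(totals)


def inflation_report(flows: list[Flow], supply: int, max_daily_growth: float = 0.02) -> list[str]:
    """Flag days on which net currency creation grows the money supply too fast."""
    net_by_day: dict[int, int] = defaultdict(int)
    for f in flows:
        net_by_day[f.day] += f.amount
    alerts = []
    for day in sorted(net_by_day):
        growth = net_by_day[day] / supply
        if growth > max_daily_growth:
            alerts.append(f"day {day}: supply +{growth:.1%}, check faucets")
        supply += net_by_day[day]
    return alerts


flows = [
    Flow(1, "quest_reward", 3_000),
    Flow(1, "repair_fee", -2_500),
    Flow(2, "quest_reward", 6_000),  # e.g. a bugged quest paying out twice
    Flow(2, "repair_fee", -1_000),
]
print(inflation_report(flows, supply=100_000))  # ['day 2: supply +5.0%, check faucets']
print(totals_by_kind(flows))  # {'quest_reward': 9000, 'repair_fee': -3500}
```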

To address all these challenges, companies need well-equipped teams with access to a wide range of backend infrastructure, along with the dashboards and tools that let them operate these services at scale. Two areas particularly ripe for innovation are LiveOps services and in-game commerce.

LiveOps as a field is still in its infancy. Commercial tools like PlayFab, Dive, Beamable, and LootLocker implement only parts of a complete LiveOps solution, so most games still feel compelled to build their own LiveOps stacks. The ideal solution would include:

- A real-time event calendar that can schedule and forecast events, create event templates, and copy previous events.

- Personalization, including player segmentation, targeted promotions, and discounts.

- Messaging, including push notifications, email, and in-game inboxes, plus translation tools for communicating with users in their own language.

- Notification authoring tools, so that non-programmers can create in-game pop-ups and notifications.

- Testing, to simulate upcoming events or new content updates, including mechanisms to roll back changes when problems arise.

In-game commerce is more developed but still needs innovation. Given that nearly 80% of digital game revenue comes from selling items or microtransactions in free-to-play games, it is striking that there is still no good off-the-shelf solution for managing in-game economies: a Shopify for the metaverse.

Existing solutions address only parts of the problem. The ideal solution would include:

- An item catalog that supports arbitrary metadata for each item.

- An app store interface for real-money sales.

- Promotions and discounts, including limited-time offers and targeted discounts.

- Reporting and analytics, with targeted reports and charts.

- User-generated content, so that games can sell player-created content and return a percentage of the revenue to those players.

- Advanced economic systems, such as item crafting (combining two items into a third), auction houses (so players can sell items to each other), trading, and gifting.

- Full integration with web3 and the blockchain.
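Three of these pieces (a catalog with arbitrary per-item metadata, limited-time discounts, and crafting two items into a third) can be illustrated with a small toy model. This is a minimal Python sketch under stated assumptions, not the design of any existing commerce product: `Item`, `Offer`, `Catalog`, integer prices in cents, and "best unexpired discount wins" are all hypothetical choices. It leaves out real-money storefronts, auctions, user-generated content, and blockchain integration entirely.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any


@dataclass
class Item:
    sku: str
    name: str
    price_cents: int
    metadata: dict[str, Any] = field(default_factory=dict)  # arbitrary per-item data


@dataclass
class Offer:
    sku: str
    discount_pct: int
    expires: datetime  # limited-time offer: ignored once `expires` has passed


class Catalog:
    """Toy item catalog with time-limited discounts and two-into-one crafting."""

    def __init__(self) -> None:
        self.items: dict[str, Item] = {}
        self.offers: list[Offer] = []
        self.recipes: dict[tuple, str] = {}  # sorted pair of input skus -> output sku

    def add(self, item: Item) -> None:
        self.items[item.sku] = item

    def add_recipe(self, a: str, b: str, output: str) -> None:
        self.recipes[tuple(sorted((a, b)))] = output  # input order doesn't matter

    def price(self, sku: str, now: datetime) -> int:
        base = self.items[sku].price_cents
        # Apply the single best unexpired discount for this item, if any.
        best = max((o.discount_pct for o in self.offers
                    if o.sku == sku and now < o.expires), default=0)
        return base * (100 - best) // 100

    def craft(self, inventory: Counter, a: str, b: str) -> str:
        key = tuple(sorted((a, b)))
        if key not in self.recipes:
            raise KeyError(f"no recipe for {a} + {b}")
        needed = Counter(key)  # handles recipes that use two of the same item
        if any(inventory[sku] < n for sku, n in needed.items()):
            raise ValueError("missing ingredients")
        inventory.subtract(needed)  # consume both inputs
        output = self.recipes[key]
        inventory[output] += 1      # grant the crafted item
        return output


if __name__ == "__main__":
    cat = Catalog()
    cat.add(Item("sword", "Iron Sword", 500, {"damage": 10}))
    cat.add(Item("gem", "Fire Gem", 300, {"element": "fire"}))
    cat.add(Item("flame_sword", "Flame Sword", 1500, {"damage": 18, "element": "fire"}))
    cat.add_recipe("sword", "gem", "flame_sword")
    cat.offers.append(Offer("flame_sword", 20, expires=datetime(2024, 6, 8)))
    print(cat.price("flame_sword", datetime(2024, 6, 1)))  # 1200
    print(cat.price("flame_sword", datetime(2024, 6, 9)))  # 1500
    inv = Counter({"sword": 1, "gem": 1})
    print(cat.craft(inv, "gem", "sword"), inv["flame_sword"])  # flame_sword 1
```

Keeping metadata as an open dictionary is what lets a single catalog describe very different items (weapons, cosmetics, player-made content) without schema changes, at the cost of pushing validation into game code.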

Next Steps: Transforming Game Development Teams

In this article, I have shared a vision of how games will change as new technologies unlock composability and interoperability between games. I hope others in the gaming community share my excitement about what lies ahead and feel inspired to join me in building the new companies needed to drive this revolution.

The coming wave of change will do more than create opportunities for new software tools and protocols. It will change the very nature of game studios, as the industry moves away from monolithic studios toward new levels of specialization.

In particular, I believe game creation will become far more specialized, and that we will see:

- World builders specializing in creating playable worlds that are both believable and wondrous, filled with creatures and characters suited to those worlds. Think of the incredibly detailed version of the Wild West created for Red Dead Redemption. Rather than investing so much in that world for a single game, why not reuse it to create many games? And why not keep investing in that world, letting it grow and evolve over time to meet the needs of those games?

- Narrative designers crafting engaging interactive narratives within these worlds, filled with plots, puzzles, and quests for players to discover and enjoy.

- Experience creators focusing on gameplay, reward mechanisms, and control schemes to craft playable experiences that span worlds. As more companies attempt to bring parts of their existing businesses into the virtual world, creators who can bridge the gap between the real and virtual worlds will be particularly valuable in the coming years.

- Platform builders providing the foundational technology that all of these specialists need to do their work.

My team and I at a16z Games are excited to invest in this future, and I can’t wait to see these changes unleash incredible levels of creativity and innovation in our industry. Gaming is already the largest single sector of the entertainment industry, and as more of the economy moves onto the web and into virtual worlds, gaming is poised to become even larger.

We haven’t even touched on some of the other exciting advances on the horizon, such as Apple’s new augmented reality headset, Meta’s recently announced VR prototypes, or WebGPU, which brings modern GPU graphics and compute to web browsers.

Now is truly the best time to become a creator.
