Talkie: Stripping Away Modern Knowledge to Re-examine the Foundational Capabilities of AI

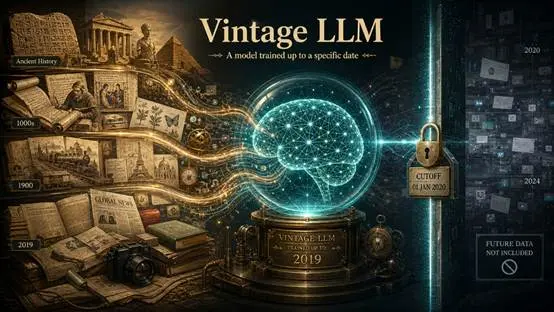

A retro language model named Talkie has recently sparked discussion in the AI community. Its defining feature is a deliberately restricted training corpus. The model has 13B parameters and was trained on 260 billion tokens, drawn primarily from English texts published before 1931: old books, newspapers, journals, scientific papers, patents, and encyclopedias. Its creators describe it as a Vintage Language Model.

In an era that pursues larger context windows, broader knowledge coverage, and real-time updates, Talkie's setup seems unusual.

Modern large models are typically trained on contemporary internet corpora. They are familiar with Python code, GitHub issues, and today's social media contexts. In contrast, Talkie resembles a research subject locked within the knowledge boundaries of 1930. It has never encountered the post-World War II world and has never seen the internet, cryptocurrency, or modern software engineering. This detachment from modern knowledge makes it a useful experimental sample for observing the foundational capabilities of models.

Deconstruction of Knowledge and Foundational Abilities

Talkie lets researchers examine a basic question: if a model has never seen the modern world, how much can it still learn from language structure and in-context examples?

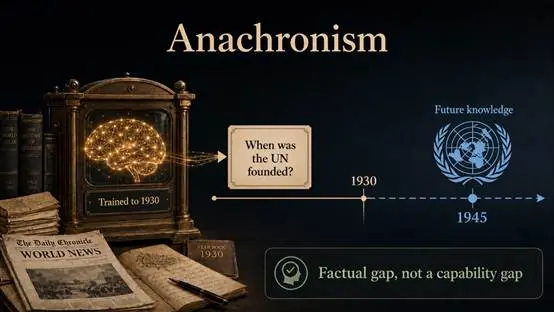

When modern models are evaluated, logical reasoning is often entangled with data recall. When a model correctly answers questions about Python code or modern political topics, it is hard to tell whether it genuinely possesses the underlying ability or simply saw similar test questions in its training data. Talkie helps separate the two:

- Anachronism: If it does not know "when the United Nations was founded," that does not mean its language comprehension is poor, because the United Nations did not exist in the period its training data covers. This is missing knowledge, not missing ability.

- Pattern Generalization: Researchers found that although Talkie has never seen Python, it can work out very simple code logic from a handful of in-context (few-shot) examples, relying only on the structure of the language in the prompt. This suggests that the Transformer architecture itself has a basic capacity for generalization, rather than relying solely on memorization.
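The few-shot setup described above can be sketched as a simple prompt builder. This is only an illustration of how in-context examples are laid out for a model that has never seen Python; the function name `build_few_shot_prompt`, the `Task:`/`Solution:` labels, and the example tasks are all assumptions made for this sketch, not the prompt format used in the Talkie evaluation.

```python
from typing import List, Tuple


def build_few_shot_prompt(examples: List[Tuple[str, str]], query: str) -> str:
    """Lay out worked (task, solution) pairs, then leave the last solution open.

    The model is expected to continue the text after the final "Solution:" line,
    inferring the pattern purely from the examples in the prompt.
    """
    blocks = [f"Task: {task}\nSolution:\n{solution}" for task, solution in examples]
    blocks.append(f"Task: {query}\nSolution:\n")
    return "\n\n".join(blocks)


# Hypothetical in-context examples; any simple, regular pattern would do.
EXAMPLES = [
    ("Add two numbers.", "def add(a, b):\n    return a + b"),
    ("Subtract the second number from the first.", "def subtract(a, b):\n    return a - b"),
]

prompt = build_few_shot_prompt(EXAMPLES, "Multiply two numbers.")
print(prompt)
```

The resulting string would be passed to the model as plain text. Whether the completion is correct then depends on pattern generalization, since nothing in a pre-1931 corpus could have been memorized for this task.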

"Twin" Control Experiment

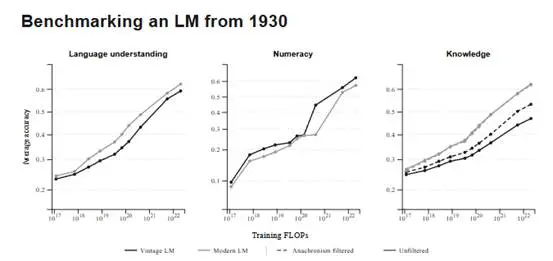

To isolate the effect of data distribution, the research team built a Modern Twin with an identical architecture. The only difference is that the twin was trained on FineWeb, a modern web dataset.

On standard benchmarks, Talkie initially lagged far behind, but that comparison is unfair to a model with no knowledge of anything after 1930. Interestingly, once researchers filtered out the questions that would count as "the future" from a 1930 perspective, the performance gap between Talkie and its modern twin narrowed by about half.

This suggests that the foundational abilities of language understanding do not depend entirely on the modern internet. High-quality old newspapers, scientific journals, and legal documents already contain accumulated patterns of common sense, causal logic, and rules of argument. What the model learns from these texts is how concepts connect and how evidence supports a judgment, and these underlying patterns are highly stable across eras.
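The filtering step can be sketched as follows. This is a minimal illustration under stated assumptions: the per-question field `latest_year_required` is a hypothetical annotation, and the four questions and their correctness flags are invented toy data, not results from the Talkie evaluation.

```python
from dataclasses import dataclass
from typing import List

CUTOFF_YEAR = 1930  # assumed cutoff, matching the article's "1930 perspective"


@dataclass
class Question:
    text: str
    latest_year_required: int  # hypothetical label: latest year of knowledge needed
    vintage_correct: bool      # did the vintage model answer correctly?
    modern_correct: bool       # did the modern twin answer correctly?


def accuracy(flags: List[bool]) -> float:
    """Fraction of True values; 0.0 for an empty list."""
    return sum(flags) / len(flags) if flags else 0.0


def gap(questions: List[Question]) -> float:
    """Modern-twin accuracy minus vintage-model accuracy."""
    modern = accuracy([q.modern_correct for q in questions])
    vintage = accuracy([q.vintage_correct for q in questions])
    return modern - vintage


def filter_answerable(questions: List[Question], cutoff: int = CUTOFF_YEAR) -> List[Question]:
    """Keep only questions that need no knowledge from after the cutoff year."""
    return [q for q in questions if q.latest_year_required <= cutoff]


# Toy data, invented purely for illustration.
questions = [
    Question("What gas do plants absorb from the air?", 1900, True, True),
    Question("Which conclusion follows from the two premises?", 1915, False, True),
    Question("When was the United Nations founded?", 1945, False, True),
    Question("What is a blockchain?", 2009, False, True),
]

answerable = filter_answerable(questions)
print(f"gap on all questions:        {gap(questions):.2f}")
print(f"gap on answerable questions: {gap(answerable):.2f}")
```

The point of the design is that both models are scored on the same filtered subset, so whatever gap remains reflects differences in ability rather than differences in what each model could possibly have known.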

Implications for Design Principles

For AI systems that prioritize traceability, verification, and explicit reasoning paths, Talkie highlights a useful design principle: the model itself does not need to compress every new fact into its parameters.

A more robust approach is for the foundation model to focus on stable language abilities, reasoning structures, and general patterns, which can be learned from high-quality, information-dense historical texts. The latest facts, technical specifications, and real-time information are then handled by retrieval systems, knowledge bases, and tool calls.

Let the model be responsible for understanding and decomposing problems, while external systems handle updating and execution. This is closer to what production environments actually need than simply pursuing ever-larger pre-training corpora and turning the model into a memory-dependent "problem solver."
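As a rough sketch of this division of labor, the example below keeps facts in an external store and only asks the model to reason over what is retrieved. Everything here is an assumption for illustration: `KNOWLEDGE_BASE`, `retrieve`, and `build_grounded_prompt` are hypothetical names, the word-overlap ranking stands in for a real search index, and the final model call is left out.

```python
import re
from typing import Dict, List, Set

# Hypothetical external knowledge base; in practice a search index or database
# that can be updated without retraining the model.
KNOWLEDGE_BASE: Dict[str, str] = {
    "united-nations": "The United Nations was founded in 1945.",
    "python": "Python is a programming language first released in 1991.",
}


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, kb: Dict[str, str], top_k: int = 1) -> List[str]:
    """Rank documents by word overlap with the query (a stand-in for real search)."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in kb.values()]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(question: str, facts: List[str]) -> str:
    """Put retrieved facts in front of the question so the model reasons over them."""
    context = "\n".join(f"- {fact}" for fact in facts) or "- (no facts retrieved)"
    return f"Facts:\n{context}\n\nQuestion: {question}\nAnswer using only the facts above:"


question = "When was the United Nations founded?"
facts = retrieve(question, KNOWLEDGE_BASE)
prompt = build_grounded_prompt(question, facts)
print(prompt)  # this text would then be sent to the language model
```

With this split, adding or correcting a fact means editing the knowledge base, and the answer can be traced back to the retrieved line that supports it.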

Conclusion

The value of Talkie lies in how clearly it draws its experimental boundaries. It reminds the industry not to equate "new data" with "new capabilities," and not to equate "knowledge coverage" with "foundational abilities."

The logic of AI progress is shifting. Rather than endlessly piling up web data, the systems best positioned for the next stage of competition are those that distill logical frameworks from curated corpora and make good use of external knowledge bases to solve practical problems.