Codatta's Purpose: Building the Knowledge Protocol Layer for AI

Understanding the Data Foundation of AI

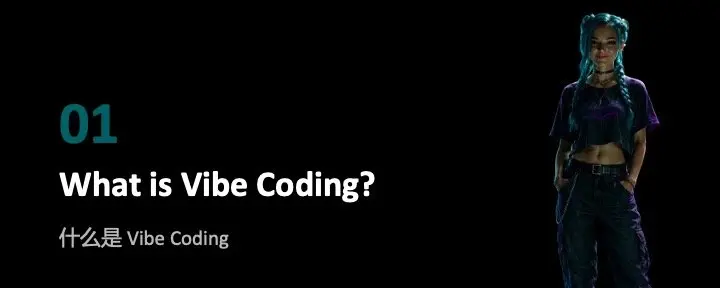

Figure 1: Classical Software System (Human-driven) vs. AI System (Data-driven)

AI models learn to recognize patterns, reason, and solve new problems from data. Unlike classical software, whose behavior is defined by explicitly programmed rules, generative AI systems such as large language models derive their behavior from vast datasets of input-output examples.

Industry estimates commonly put about 80% of AI engineering investment into data work, including pipeline construction, cleaning, and preprocessing, rather than algorithm development. High-quality, high-knowledge-density data is therefore crucial. As large language models have advanced, demand for specialized knowledge and reasoning data has grown significantly, while the need for basic annotation has declined because foundation models can increasingly handle it themselves.

The Era of Generative AI: The Evolution of Data's Role

Figure 2: AI Model Development Stages: From Foundational to Vertical AI

In the era of generative AI, the role of data is changing fundamentally. Traditional annotated data is becoming less important, while demand for high-quality, high-knowledge-density data is growing explosively. Model training typically proceeds in three stages: first, pre-training on internet data to build foundational capabilities; second, fine-tuning on human-annotated preference data to improve interaction quality; and finally, reinforcement learning, in which synthetic data is generated to improve the model's generalization.

However, research published in journals such as Nature shows that synthetic data has significant limitations: training models recursively on their own generated output can lead to "model collapse," severely degrading output quality. This underscores the critical value of real data. As foundational AI capabilities improve, specialized applications rely increasingly on high-quality knowledge contributed by human experts, and such human-generated data remains indispensable for model fine-tuning and performance evaluation.

Reshaping the AI Data Ecosystem with Royalty Incentives

AI developers (especially startups) face high initial costs when acquiring high-quality specialized knowledge data. Traditional procurement methods require substantial upfront investment, making it difficult to obtain critical human intelligence data, thereby delaying AI innovation.

Domain experts provide essential knowledge for AI systems, and their insights may enable AI to replace their own jobs. However, they often receive only one-time compensation, which is frequently inadequate. This misalignment of incentives not only dampens expert enthusiasm but also raises fairness issues regarding the distribution of AI benefits.

Figure 3: Comparing GenAI Business Models

Codatta addresses this problem with a blockchain-based royalty model built on data assetization. Instead of paying upfront, developers obtain high-quality data in exchange for a share of future revenue. By tying compensation to long-term earnings, Codatta lowers the barrier to innovation while building a sustainable incentive system for experts.

Data contributors gain shared ownership of their data and receive ongoing royalties from AI applications that use it, a model akin to investing in AI startups. Because these assets have distinctive attributes and real potential for value creation, the associated rights can also be traded, giving contributors liquidity when they want to realize revenue. Since this income grows with the data's influence, it reflects the value of specialized knowledge more accurately, and far more fairly, than traditional one-time buyout models.
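To make the revenue-sharing idea concrete, here is a minimal sketch of how one period's royalties from an AI application might be split among the co-owners of a dataset. The royalty rate (in basis points), the integer ownership units, and the function name `split_royalties` are illustrative assumptions, not Codatta's actual contract logic. Amounts are kept in integer cents so that every cent of the pool is paid out exactly once.

```python
from typing import Dict

def split_royalties(revenue_cents: int, royalty_rate_bps: int,
                    shares: Dict[str, int]) -> Dict[str, int]:
    """Split one period's royalty pool among data co-owners, pro rata to their units.

    revenue_cents    -- application revenue for the period, in integer cents
    royalty_rate_bps -- hypothetical share of revenue reserved for contributors (10_000 = 100%)
    shares           -- hypothetical ownership units per contributor
    """
    total_units = sum(shares.values())
    if total_units == 0:
        return {}
    pool = revenue_cents * royalty_rate_bps // 10_000
    # Floor each pro-rata amount so no one is overpaid.
    payouts = {who: pool * units // total_units for who, units in shares.items()}
    # Largest-remainder method: leftover cents go to the largest fractional parts.
    leftover = pool - sum(payouts.values())
    ranked = sorted(shares, key=lambda who: (pool * shares[who]) % total_units, reverse=True)
    for who in ranked[:leftover]:
        payouts[who] += 1
    return payouts
```

For example, `split_royalties(1_000_000, 2_000, {"alice": 50, "bob": 30, "carol": 20})` reserves 20% of $10,000 in revenue (a $2,000 pool) and pays 100000, 60000, and 40000 cents respectively. Calling it again every period produces the continuing royalty stream described above, in contrast to a single buyout payment.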

From Data to Assets: On-Chain Royalty Payment Practices

Figure 4: Codatta's Data Assetization Framework

This diagram illustrates the core mechanism of Codatta's data assetization and royalty distribution. On the left, data contributions (X, Y, and knowledge points KP0, KP1, KP2, KV0, KV1) are registered on-chain along with their content hash values, while the encrypted data payloads are kept in hybrid storage. On the right, specialized AI models use this data to serve inferences to clients. A central "data ownership" module tracks the value each contribution adds, enabling fair royalty distribution based on usage and impact.
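The commit-and-verify pattern in the figure can be sketched as follows: only a SHA-256 digest of each contribution is written to the (here simulated) chain, the payload lives in a separate store, and anyone who later obtains the payload can check it against the public commitment. The in-memory `chain` list and `blob_store` dict are hypothetical stand-ins for a blockchain and hybrid storage. Encryption is assumed to happen before `submit` is called (Python's standard library has no cipher), and the source does not say whether the hash covers the plaintext or the ciphertext.

```python
import hashlib
import time
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class ContributionRecord:
    contributor: str
    content_hash: str  # SHA-256 hex digest of the stored payload
    timestamp: float

# Hypothetical stand-ins: a list for the append-only on-chain registry,
# a dict for the off-chain store of (already encrypted) payloads, keyed by hash.
chain: List[ContributionRecord] = []
blob_store: Dict[str, bytes] = {}

def submit(contributor: str, encrypted_payload: bytes) -> ContributionRecord:
    """Store the payload off-chain and commit only its hash on-chain."""
    digest = hashlib.sha256(encrypted_payload).hexdigest()
    blob_store[digest] = encrypted_payload
    record = ContributionRecord(contributor, digest, time.time())
    chain.append(record)
    return record

def verify(record: ContributionRecord, payload: bytes) -> bool:
    """Check a payload against its public on-chain commitment."""
    return hashlib.sha256(payload).hexdigest() == record.content_hash
```

Because the chain holds only a fixed-size digest, the record proves who contributed what and when without revealing the data itself; any change to the stored bytes makes `verify` return `False`.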

To achieve data assetization, Codatta builds on three core pillars spanning infrastructure, community, and incentive design:

- Privacy-Protecting Transparency:

Our system records every data contribution on the blockchain, creating a permanent, verifiable record of source, ownership, and attribution. All data assets are stored encrypted, on an architecture that supports both decentralized and centralized storage, so contributions can be fairly recognized and rewarded without exposing their commercial value. Through smart contracts, Codatta turns knowledge into traceable, revenue-generating digital assets.

- Collaborative Network of Human Contributors and Professional AI:

We combine human experts and AI in a transparent, reputation-driven system. AI handles first-pass tasks for speed and scale, while humans refine the outputs with professional insight. This two-layer approach is increasingly common across the industry. Codatta extends it by letting humans take on multiple roles: knowledge provider, validator, or funder. Each role is publicly visible and tied to a dynamic reputation system that rewards quality and accountability.

- Programmable Incentive Modules:

Data interactions (collection, validation, improvement) are linked to customized rewards. Smart contracts automatically allocate royalties, reputation, or staking incentives, so that compensation reflects the value of the data. These modules use valuation and attribution algorithms to measure a contribution's impact during training and inference, and they can be adapted to different data types, supporting fair long-term compensation and a sustainable knowledge economy.
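The source does not name the valuation or attribution algorithm these modules use. One well-known technique from the data-valuation literature is the Shapley value, which credits each contribution with its average marginal effect on a utility score (for example, model accuracy) across all subsets of contributions. The sketch below computes exact Shapley values for three hypothetical contributions A, B, and C from a made-up accuracy table, then normalizes them into royalty weights; it illustrates the idea and is not Codatta's method.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, FrozenSet, Sequence

Utility = Callable[[FrozenSet[str]], float]

def shapley_values(contributors: Sequence[str], utility: Utility) -> Dict[str, float]:
    """Exact Shapley value of each contributor: the weighted average of its
    marginal gain over every coalition of the other contributors. Needs
    O(n * 2^n) utility calls, so it only suits small examples."""
    n = len(contributors)
    values = {c: 0.0 for c in contributors}
    for c in contributors:
        others = [o for o in contributors if o != c]
        for k in range(n):  # coalition sizes 0 .. n-1
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                base = frozenset(coalition)
                values[c] += weight * (utility(base | {c}) - utility(base))
    return values

def royalty_weights(values: Dict[str, float]) -> Dict[str, float]:
    """Clip negative values to zero and normalize into shares summing to 1."""
    clipped = {c: max(v, 0.0) for c, v in values.items()}
    total = sum(clipped.values())
    if total == 0:
        return {c: 0.0 for c in values}
    return {c: v / total for c, v in clipped.items()}

# Hypothetical utility: model accuracy after training on each subset of contributions.
ACCURACY = {
    frozenset(): 0.0,
    frozenset({"A"}): 0.60, frozenset({"B"}): 0.50, frozenset({"C"}): 0.10,
    frozenset({"A", "B"}): 0.80, frozenset({"A", "C"}): 0.65, frozenset({"B", "C"}): 0.55,
    frozenset({"A", "B", "C"}): 0.85,
}

values = shapley_values(["A", "B", "C"], lambda s: ACCURACY[s])
weights = royalty_weights(values)
```

With this table the values come out at roughly A ≈ 0.442, B ≈ 0.342, and C ≈ 0.067. They sum to the full-dataset utility of 0.85 (the efficiency property), so A would receive about 52% of the royalty pool. Exact computation touches every subset, so a system with many contributors would need a sampling-based approximation instead.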

These three pillars (on-chain transparency with encrypted storage, a human-AI hybrid network, and programmable reward mechanisms) together form Codatta's data assetization framework. The framework turns knowledge contributions into secure, traceable digital assets that generate continuous royalty income, bridging human intelligence and scalable, sustainable AI development.
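The reputation-driven validation in the second pillar could work roughly like this: an AI pre-label and several human validators vote on a label, the label with the most reputation behind it wins, and each participant's reputation then rises or falls depending on whether they agreed. The vote format, the multiplicative reward and penalty rates, and the reputation floor are hypothetical parameters chosen for illustration; the source does not describe Codatta's actual reputation formula.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Vote = Tuple[str, str]  # (participant id, proposed label); hypothetical format

def weighted_consensus(votes: List[Vote], reputation: Dict[str, float]) -> str:
    """Return the label with the most total reputation behind it.
    Ties go to the label that appeared first in `votes`."""
    if not votes:
        raise ValueError("no votes to aggregate")
    tally: Dict[str, float] = defaultdict(float)
    for participant, label in votes:
        tally[label] += reputation.get(participant, 0.0)
    return max(tally, key=lambda label: tally[label])

def update_reputation(votes: List[Vote], reputation: Dict[str, float], consensus: str,
                      reward: float = 0.10, penalty: float = 0.20,
                      floor: float = 0.01) -> Dict[str, float]:
    """Return a new reputation map: agreeing with the consensus multiplies
    reputation by (1 + reward), disagreeing by (1 - penalty), never below `floor`."""
    updated = dict(reputation)
    for participant, label in votes:
        current = updated.get(participant, floor)
        factor = 1 + reward if label == consensus else 1 - penalty
        updated[participant] = max(floor, current * factor)
    return updated
```

For instance, with reputations `{"ai_prelabel": 0.5, "alice": 2.0, "bob": 1.0, "carol": 0.8}`, if the AI pre-label and carol tag an address as "phishing" while alice and bob tag it "exchange", "exchange" wins 3.0 to 1.3. Afterwards alice and bob gain 10% reputation, while the AI pre-label and carol lose 20%, so consistently accurate participants carry more weight in later rounds.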

Open Design: Connecting Traditional AI with Decentralized Intelligence

Codatta is a flexible knowledge network that bridges decentralized AI (DeAI) and traditional Web2/Web3 human intelligence services. In traditional data annotation scenarios, Codatta can act as a high-quality backend for platforms such as MTurk and Scale AI: with support for fiat and stablecoin payments, these services can tap its expert network for advanced knowledge data. Traditional platforms thus gain blockchain-level verification and quality assurance in a plug-and-play fashion, without having to deal with the complexities of Web3.

Within the DeAI tech stack, Codatta focuses on data curation, the critical first step. We believe blockchain is best suited in DeAI for contributor identity verification, data and model validation, provenance tracking, and usage monitoring. Our design offloads heavy computation and storage to centralized infrastructure for efficiency, while decentralized systems ensure transparency, accountability, and fair value distribution. This hybrid approach preserves integrity while remaining scalable, building a trustworthy AI data supply chain.

By connecting centralized and decentralized ecosystems, Codatta aims to create a fairer and higher-performing AI system—where human contributors are recognized, data integrity is protected, and incentive mechanisms align with long-term value creation.

Note: Codatta's journey began with the open-source Microscope project (in collaboration with Coinbase, Messari, and GoPlus) and has since evolved into a universal human intelligence platform for generative AI, aiming to become a foundation for AI developers. Its flagship product, the Crypto Account Annotation System (CAA), has covered 35 blockchain networks, annotated 46 million high-risk addresses, and completed 560 million annotations across 95 categories, built collaboratively by more than 100,000 contributors. The business has since expanded into evaluation, e-commerce, healthcare, and fitness, following a clear development roadmap:

- 2024: cover 100+ knowledge domains and gather 300,000+ contributors
- 2025: achieve complete protocol decentralization
- 2026: complete comprehensive data assetization, making every knowledge contribution a revenue-generating asset