A comprehensive guide to the next decade, the AI investment bible for genius youths: "Situational Awareness"

Author: @giantcutie666

In 2024, Leopold Aschenbrenner, a young prodigy born in the 2000s, left OpenAI and started a fund.

At the same time, he wrote down his investment ideas:

Looking back now, this 165-page paper "Situational Awareness: The Decade Ahead" has become legendary.

Those who understood it last year must have achieved financial freedom this year!

The original 165-page English version is linked below, followed by my attempt at a condensed version:

https://situational-awareness.ai/wp-content/uploads/2024/06/situationalawareness.pdf

The main point of the paper is: within this decade, humanity will create AGI (capable of performing all tasks of an average person), followed closely by superintelligence.

Currently, fewer than a few hundred people understand this, most of whom are clustered in a few streets in San Francisco. Others—including Wall Street, the media, and Washington—have yet to catch on (some on Wall Street have started to realize it this year).

Part One: Why AGI Will Exist by 2027

The method is simple but effective: instead of predicting time, predict the scale of computing power.

In the four years from GPT-2 to GPT-4, the model's "effective computing power" increased by about 100,000 times, which works out to roughly tenfold every ten months!
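As a quick sanity check on that pace, here is a minimal sketch that converts the two figures quoted above (about 100,000-fold, over four years) into per-year rates. The function name `growth_summary` and its output keys are my own, chosen for illustration; they do not come from the paper.

```python
import math


def growth_summary(total_factor: float, years: float) -> dict:
    """Convert a total growth factor over a period into average per-year rates."""
    ooms = math.log10(total_factor)       # orders of magnitude gained in total
    ooms_per_year = ooms / years          # average pace in orders of magnitude per year
    return {
        "ooms": ooms,
        "ooms_per_year": ooms_per_year,
        "annual_multiplier": 10 ** ooms_per_year,   # growth factor per year
        "months_per_tenfold": 12 / ooms_per_year,   # time needed for each 10x
    }


# Figures taken from the text: ~100,000x over ~4 years (GPT-2 to GPT-4).
summary = growth_summary(total_factor=100_000, years=4)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

With these inputs, the implied pace is 1.25 orders of magnitude per year, so each tenfold step takes a little under ten months.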

GPT-2 was like a kindergarten child struggling to write half-coherent sentences, while GPT-4 is like a smart high school student capable of passing the bar exam and tackling PhD-level scientific problems.

This 100,000-fold increase comes from three sources:

First, hardware investment. The computing power used for training has grown more than threefold each year. Moore's Law delivers only about 1.3 times per year; by the author's account, AI compute has grown about five times faster than that, driven by heavy investment and purpose-built AI chips.
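One way to read the "fivefold" comparison is as a ratio of growth paces measured in orders of magnitude per year. That reading is my assumption, not something the summary states. The sketch below applies it to the 1.3x and 3x figures from the text; the 3.7x value is a hypothetical example of "more than threefold", added only to show how sensitive the ratio is.

```python
import math


def ooms_per_year(annual_multiplier: float) -> float:
    """Orders of magnitude gained per year for a given yearly growth factor."""
    return math.log10(annual_multiplier)


def pace_ratio(fast_multiplier: float, slow_multiplier: float) -> float:
    """How many times faster one trend is than another, in orders of magnitude per year."""
    return ooms_per_year(fast_multiplier) / ooms_per_year(slow_multiplier)


MOORE_MULTIPLIER = 1.3  # figure from the text

# 3.0 is the text's "threefold"; 3.7 is a hypothetical "more than threefold" value.
for compute_multiplier in (3.0, 3.7):
    ratio = pace_ratio(compute_multiplier, MOORE_MULTIPLIER)
    print(f"{compute_multiplier}x per year is {ratio:.1f} times the Moore's Law pace")
```

Under this reading, exactly threefold growth is a bit over four times the Moore's Law pace, and the ratio approaches five as the yearly multiplier rises toward 3.7.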

Second, smarter algorithms. Reaching a given level of mathematical capability today costs about 1,000 times less at inference than it did two years ago, thanks to the work of algorithm engineers.

Third, and most interesting, unlocking capabilities that are suppressed by default (unhobbling).

The model itself has many capabilities but is constrained by default settings. For example, the base model of ChatGPT was actually quite smart but produced nonsensical outputs—RLHF unlocked its potential.

Similarly, allowing the model to "think for a while before answering" (chain of thought) unlocked its reasoning ability. Providing it with tools and long-term memory unlocked further layers.

Over the next four years (by 2027), the author predicts that these three sources will deliver another 100,000-fold increase.

The key insight lies in unhobbling:

Current models can only reason for "a few minutes" at a time—about a few hundred tokens. What if they could "think for months"—up to millions of tokens?

For instance, the gap between you and Einstein is not as significant as the gap between "you think for 5 minutes" and "you think for 5 months."

If this unlocking is achieved, it would be an additional leap of 3 to 4 orders of magnitude in how much thinking a model can apply to a single problem. This fruit is already within sight.

Extrapolating from this, around 2027, a type of AI will emerge: capable of working independently like a remote employee for weeks, planning tasks, writing code, conducting experiments, fixing bugs, and submitting results.

It could completely replace an OpenAI researcher, which is how the author defines AGI.

The greatest uncertainty comes from the data wall—high-quality public internet data is being rapidly consumed.

Part Two: AGI Is Not the End, But the Explosive Point

What happens once AI can perform the work of AI researchers?

With computing power unchanged, you are no longer limited by "the few hundred researchers at OpenAI/Anthropic/DeepMind combined"—you can run 100 million copies of AI researchers, each operating at 100 times human speed.

At 100 times human speed, each copy would complete a year's worth of human work every few days.
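The arithmetic behind these figures is simple enough to sketch. The helper names below are mine, and the total is an idealized upper bound that ignores the compute bottlenecks the author himself acknowledges.

```python
DAYS_PER_YEAR = 365


def days_per_human_year(speedup: float) -> float:
    """Calendar days one copy needs to do a year of human-speed work."""
    return DAYS_PER_YEAR / speedup


def researcher_years_per_calendar_year(copies: int, speedup: int) -> int:
    """Idealized total output, in human-researcher-years, per calendar year."""
    return copies * speedup


# Figures from the text: 100 million copies, each at 100x human speed.
COPIES = 100_000_000
SPEEDUP = 100

print(f"One copy does a human-year of work in {days_per_human_year(SPEEDUP):.2f} days")
total = researcher_years_per_calendar_year(COPIES, SPEEDUP)
print(f"Idealized output: {total:,} researcher-years per calendar year")
```

Set against "a few hundred researchers", even a small fraction of that idealized total would be a very large multiple of current research effort, which is the core of the author's argument.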

These automated researchers also have structural advantages: they read all ML papers, remember every experiment, share thoughts directly between copies, and training one means all are trained (no need for each new employee to slowly get up to speed).

This means that algorithmic progress that would normally take humans 10 years could be compressed into just one year.

That year would then produce an even smarter next-generation model, leading to another round of compression. The transition from AGI to superintelligence far exceeding human capabilities could take just a few months to a year or two.

The author acknowledges there are bottlenecks—such as experiments requiring computing power, and even with many copies, you still have to wait for GPUs. But he argues that bottlenecks will only slow down the explosion, not prevent it.

What Does Superintelligence Look Like?

In terms of scale, it would be superhuman—hundreds of millions of copies running in parallel, instant integration across all disciplines, accumulating the equivalent of a thousand years of human experience in just weeks.

Qualitatively, it would surpass humans—like AlphaGo making move 37 (a move human experts couldn't conceive of for decades), but applied across all fields. It could identify code vulnerabilities that humans would never see in a lifetime and write code that humans could never understand.

We would be like elementary school students reading a PhD thesis.

The consequences would be: robotics would be solved (this is primarily an ML algorithm issue, not a hardware issue), synthetic biology would be weaponized, and swarms of stealth drones could preemptively destroy nuclear weapons.

The entire international military balance would be overturned within a few years.

Part Three: Four Truly Urgent Problems

Problem One: Trillion-Dollar Clusters

This competition is not just about coders writing code; it's industrial mobilization.

Each generation of models requires larger clusters, and larger clusters need both new power plants and new chip factories.

At the current pace: a 1GW cluster by 2026 (the power of one Hoover Dam), 10GW by 2028 (the power of a medium-sized U.S. state), and 100GW by 2030 (over 20% of all U.S. electricity generation).
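The three milestones above follow a "tenfold every two years" pattern, which the sketch below extrapolates. To sanity-check the "over 20%" figure, I assume annual U.S. electricity generation of roughly 4,200 TWh; that number is my own approximation, not a figure from the text, and the function and constant names are illustrative.

```python
HOURS_PER_YEAR = 8760

# Assumption (not from the text): U.S. annual electricity generation, roughly 4,200 TWh.
US_ANNUAL_GENERATION_TWH = 4200


def cluster_power_gw(year: int, base_year: int = 2026, base_gw: float = 1.0,
                     factor_per_two_years: float = 10.0) -> float:
    """Extrapolate the largest cluster's power draw, growing 10x every two years."""
    return base_gw * factor_per_two_years ** ((year - base_year) / 2)


# Average U.S. generation expressed as continuous power in GW.
us_average_gw = US_ANNUAL_GENERATION_TWH * 1000 / HOURS_PER_YEAR

for year in (2026, 2028, 2030):
    power = cluster_power_gw(year)
    share = power / us_average_gw
    print(f"{year}: {power:.0f} GW, about {share:.1%} of average U.S. generation")
```

Under that assumption, a 100GW cluster in 2030 comes out at roughly a fifth of average U.S. generation, consistent with the figure quoted above.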

Investment scale may exceed $1 trillion per year by 2027.

The real bottleneck is not money or GPUs, but electricity. "Where do I find 10GW?" is currently the hottest topic in SF.

To build this on U.S. soil, environmental permitting must be relaxed, and federal powers must be used to unlock land. The author elevates this to a matter of national security.

If the U.S. cannot build it, the clusters will move to the UAE or Saudi Arabia, effectively handing the AGI key to other countries.

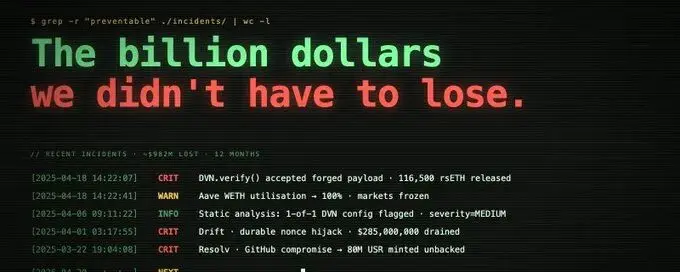

Problem Two: Labs Are Leaky

Current AI labs have security on par with an ordinary Silicon Valley startup.

Google DeepMind (the best in the industry) admits its defense capabilities against state-level adversaries are at level 0 (out of a maximum of 4).

What does this mean? The "weights" of AGI models are essentially just a large file on a server.

If this file is stolen, it would be like handing "automated AI researchers" directly to China—they could immediately run their own intelligence explosion, nullifying all U.S. advantages.

More urgently, the algorithmic secrets, meaning the key breakthroughs for the next-generation paradigm (how to break through the data wall), are taking shape right now in the Slack channels and around the desks of various companies in SF.

The author asserts: within the next 12 to 24 months, key AGI breakthroughs will leak to China. This is not a maybe; it is almost certain.

To achieve a security level that can withstand China's full force, hardware-level isolation, personnel background checks at the level of atomic bomb engineers, and specialized cluster designs are required—these will take at least a few years to iterate and establish. If action is not taken immediately, by 2027, when AGI is achieved, security will not keep up, leaving only two bad options: either directly hand it over to other countries or stop and wait for security to be built (potentially losing the lead).

Problem Three: Alignment Issues

The current alignment technique is RLHF: humans score the model's outputs, and the model learns to please humans.

This method works for AI that is dumber than humans. Once AI becomes several orders of magnitude smarter than humans, it will fail completely: how do you evaluate a PhD thesis you cannot understand?

The intelligence explosion makes this issue extremely urgent:

In less than a year, we could jump from "RLHF still works" to "RLHF completely fails," with no time for iterative trial and error.

At the same time, we could jump from "small errors" (ChatGPT swearing) to "catastrophic errors" (superintelligence escaping from the cluster and hacking military systems).

The intermediate architecture will undergo multiple generations of evolution, and we will completely fail to understand how the final superintelligence thinks.

Current models reason using English tokens (relatively transparent), but in the future they will likely evolve to reason using internal latent states, which would be completely uninterpretable.

The author is optimistic about technical solutions: there is plenty of low-hanging fruit, and progress is being made in interpretability research.

However, he is extremely pessimistic about organizational execution—there are no more than a few dozen serious people globally working on this, and labs show no signs of paying the price for safety. "We are overly reliant on luck."

Problem Four: The Free World Must Win

The author spends a lot of time arguing that China is not only still in the game but very competitive.

In terms of computing power: Huawei's 7nm chips (produced by SMIC) perform at the level of Nvidia's A100, with a cost-performance ratio 2-3 times worse but still sufficient.

In terms of construction capability: over the past decade, China has added roughly as much new electricity capacity as the entire U.S. has in total. When it comes to industrial mobilization to build a 100GW cluster, China may be stronger than the U.S.

In terms of theft: as long as U.S. labs are not secured, key algorithms will inevitably leak within two years.

There is a disturbing coincidence in timing: the AGI timeline (~2027) converges with the widely watched window for a potential CCP attack on Taiwan (~2027). "The AGI endgame may unfold against the backdrop of a world war."

The author's conclusion: the U.S. must maintain a "healthy lead"—he suggests two years. This two-year buffer is meant to stabilize the situation and negotiate the international order for the superintelligence era with China.

With less of a lead than this, the entire world will be pushed into a "survival race through intelligence explosion": all safety margins will be zeroed out, and everyone will be racing flat out with no protection.

Part Four: The Project (AGI Version of the Manhattan Project)

At this point, the author makes the boldest prediction of the entire paper.

"It is impossible for the U.S. government to let an SF startup develop superintelligence." Imagine having Uber develop the atomic bomb back in the day.

Prediction: at some point in 2027 or 2028, the U.S. national security apparatus will take over the AGI project in some form. The form could be a defense contractor model (OpenAI becoming Lockheed Martin), a joint venture model (cloud providers + labs + government), or, at the extreme, outright nationalization.

Core researchers would move into SCIF secure facilities, and trillion-dollar clusters would be built at wartime speed.

How this would come about: a first truly frightening demonstration of capability would emerge in 2026/27, possibly "helping novices create biological weapons" or AI autonomously hacking critical infrastructure.

As details of CCP infiltration into labs are revealed, the atmosphere in Washington would shift dramatically. "Do we need an AGI Manhattan Project?" would slowly, then suddenly become the number one topic.

Why must it be the government? Because superintelligence is akin to nuclear weapons, not internet technology. Private companies have never been allowed to hold complete nuclear weapons.

To achieve a security level that can withstand China's full force, only government infrastructure